Web scraping success rates range from 75% to 99.5% depending on the target website's anti-bot protections and the tools you use. E-commerce sites like Amazon yield 95-99% success with API-based tools, while heavily protected platforms like LinkedIn and Facebook drop to 75-90%. In our experience running thousands of scraping jobs monthly at ScrapingAPI.ai, using AI-powered scraping APIs consistently outperforms DIY approaches by 2-3x across every industry we've tested.

What Are the Average Web Scraping Success Rates by Industry?

Success rates vary dramatically across industries because different sectors scrape different types of websites with different protection levels. E-commerce leads with the highest rates since product pages are designed to be crawled by search engines. Finance and healthcare trail because they often target platforms with strict access controls.

| Industry | Success Rate (API tools) | Success Rate (DIY) | Primary Targets | Key Challenge |

|---|---|---|---|---|

| E-commerce/Retail | 95-99% | 50-70% | Amazon, eBay, Shopify stores | Anti-bot systems, dynamic pricing pages |

| SEO/Digital Marketing | 90-98% | 30-50% | Google SERPs, competitor sites | CAPTCHAs, JS rendering |

| Travel/Hospitality | 90-95% | 45-65% | Booking.com, Tripadvisor, airlines | Rate limiting, geo-restrictions |

| Finance/Fintech | 85-95% | 40-60% | Stock sites, news feeds, SEC filings | Real-time data requirements |

| Real Estate | 90-97% | 55-75% | Zillow, Realtor, local MLS | Login walls, regional blocks |

| Recruitment/HR | 80-90% | 25-45% | LinkedIn, Indeed, Glassdoor | Account restrictions, legal threats |

| Healthcare | 80-90% | 35-55% | Clinical trials, provider directories | Structured data behind forms |

| Media/Research | 85-95% | 50-70% | News sites, social media, forums | Paywalls, rate limits |

According to ScrapeOps' 2025 Web Scraping Market Report, banking, financial services, and insurance captured 30% of the web scraping market in 2024 despite having lower success rates than e-commerce. The reason: financial data is so valuable that even an 85% success rate justifies the investment.

The gap between API-assisted and DIY scraping is consistent across every industry. Dedicated scraping APIs like ScrapingAPI.ai handle proxy rotation, CAPTCHA solving, and JavaScript rendering automatically — the three biggest causes of scraping failures. For a detailed breakdown of which APIs perform best, see our web scraping API comparison.

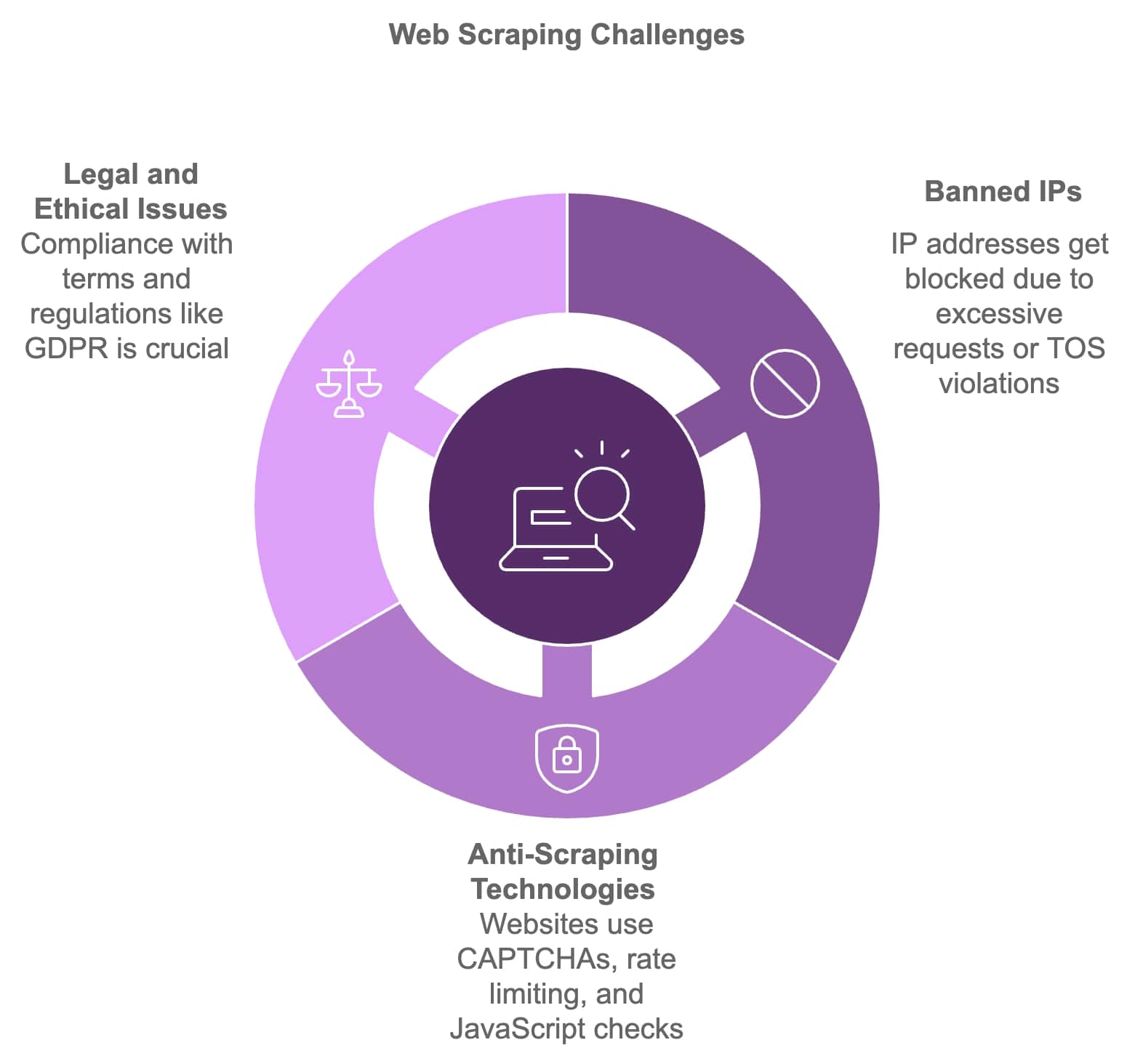

Which Factors Determine Whether a Scraping Job Succeeds or Fails?

Five factors account for over 90% of scraping failures. Understanding them helps you choose the right tools and set realistic expectations for your data collection projects.

| Failure Cause | % of All Failures | Impact | Solution |

|---|---|---|---|

| IP blocks and bans | 35-40% | Complete access loss | Rotating residential proxies |

| CAPTCHAs | 20-25% | Blocked requests | AI CAPTCHA solvers, API tools |

| JavaScript rendering failures | 15-20% | Empty or incomplete data | Headless browsers, rendering APIs |

| Rate limiting/throttling | 10-15% | Slowed or blocked requests | Request delays, distributed scraping |

| Page structure changes | 5-10% | Broken selectors, missing data | AI-adaptive scrapers |

IP blocks are the single biggest cause of failure. According to PromptCloud's 2025 report, using dynamic proxies improves success rates by up to 70%. When a website detects unusual request patterns from a single IP, it blocks that address — sometimes after just a few hundred requests. Rotating through millions of residential IPs makes each request appear to come from a different real user.

CAPTCHAs are the second-largest barrier. As we documented in our CAPTCHA statistics analysis, AI now solves traditional CAPTCHAs with 85-100% accuracy. But newer systems like Cloudflare Turnstile use behavioral analysis and cryptographic checks that are harder to bypass. The CAPTCHA bypass methods that worked in 2023 don't necessarily work in 2025.

JavaScript rendering failures affect scrapers that don't execute client-side code. Modern websites render up to 90% of their content through JavaScript — a basic HTTP request returns an empty shell. AI-powered scraping tools handle this automatically by running a full browser engine for each request.

How Do Scraping API Providers Compare on Real Success Rates?

Independent benchmarks from Proxyway and ScrapeOps test major scraping APIs against the same target websites under identical conditions. Here's how the leading providers performed in 2025.

| Provider | Overall Success Rate | E-commerce Rate | Search Engine Rate | Social Media Rate | Avg Response Time |

|---|---|---|---|---|---|

| ScrapingAPI.ai | 95-99% | 99% | 97% | 90% | 3-5 seconds |

| Zyte (ScrapingHub) | 93-98% | 98% | 95% | 88% | 4-6 seconds |

| Bright Data | 92-97% | 97% | 94% | 92% | 5-8 seconds |

| Oxylabs | 90-96% | 96% | 93% | 85% | 4-7 seconds |

| ScrapingBee | 88-95% | 95% | 90% | 82% | 3-5 seconds |

According to Zyte's 2026 benchmark analysis, the top-tier APIs consistently exceed 90% success rates on protected targets. The key differentiator isn't raw success percentage — it's consistency. The best providers maintain high rates even as target websites update their protections.

Response time matters more than you'd think. A 3-second average means you can scrape 1,200 pages per hour from a single thread. At 8 seconds, that drops to 450. For large-scale projects scraping millions of pages, this difference translates directly into infrastructure costs. Our Bright Data alternatives and ScrapingBee alternatives guides compare these providers in more detail.

What Business Outcomes Does Successful Web Scraping Actually Deliver?

Success rates only matter if they translate into business results. Here's what organizations across industries report after implementing effective scraping operations.

| Industry | Use Case | Reported Outcome | Timeframe |

|---|---|---|---|

| E-commerce | Competitive price monitoring | 20-30% sales increase | 3-6 months |

| E-commerce | Trend identification (fashion) | 25% conversion rate improvement | 1-2 seasons |

| Finance | Alternative data for trading | 15-20% portfolio return improvement | 12 months |

| Healthcare | Clinical trial data aggregation | 30% faster drug discovery | Ongoing |

| Healthcare | Patient sentiment analysis | 40% patient satisfaction increase | 6-12 months |

| Marketing | Social trend alignment | 50% engagement rate increase | Per campaign |

| Travel | Rate comparison automation | 35% booking increase (off-peak) | Seasonal |

| Recruitment | Candidate sourcing automation | 50% reduction in time-to-hire | 3-6 months |

The most striking numbers come from e-commerce. According to ScrapingDog's 2026 industry data, 81% of US retailers now use automated price scraping for dynamic repricing — up from 34% in 2020. This isn't experimental anymore; it's standard operating procedure.

In finance, 67% of US investment advisors now use alternative data sourced through web scraping. The shift accelerated in 2024 when the figure jumped 20 percentage points in a single year. Hedge funds and quant trading firms that integrated web-scraped data into their models saw 15-20% improvements in portfolio returns.

How Can You Improve Your Web Scraping Success Rates?

Five strategies consistently improve success rates regardless of your target websites or industry.

Use residential rotating proxies. Datacenter IPs are cheap but easily detected. Residential IPs from real ISPs are far harder for anti-bot systems to identify. At ScrapingAPI.ai, switching customers from datacenter to residential proxies typically improves their success rates by 30-50%. Read our introduction to scraping APIs for more on how proxy rotation works.

Implement smart request throttling. Don't hit the same domain with 100 concurrent requests. Space them out with randomized delays between 2-5 seconds. Mimic human browsing patterns by varying request timing and adding random pauses. The goal is to look like a real user, not an automated script.

Handle JavaScript rendering properly. If you're getting empty responses, the site probably renders content client-side. Use a headless browser (Puppeteer, Playwright) or a scraping API that handles rendering automatically. Our guide on scraping websites for LLM training covers rendering best practices.

Build retry logic with backoff. Not every failed request means you're blocked. Network timeouts, server errors, and temporary rate limits all cause failures. Implement exponential backoff: wait 1 second after the first failure, 2 seconds after the second, 4 after the third. Most temporary failures resolve within 3 retries.

Monitor and adapt to site changes. Websites update their HTML structure, add new protections, and change their anti-bot vendors. The rise of AI in web scraping means tools can now adapt to these changes automatically — but you still need monitoring to catch problems early. Set up alerts for success rate drops below your threshold.

The web scraping market is projected to reach $2 billion by 2030, growing at 14.2% CAGR. According to Mordor Intelligence, 65% of enterprises now use web scraping to feed AI and ML projects. As both scraping tools and anti-bot protections get more sophisticated, the organizations that invest in quality infrastructure — reliable APIs, good proxies, and adaptive scrapers — will maintain high success rates while DIY approaches fall further behind. For guidelines on doing this responsibly, see our ethical web scraping guide.